
1/10/2024 0 Comments Datagrip kafka Then we provide the required Kafka information (brokers url, topic, schema registry.) So let’s create our index.js file (in the same directory), which will look like this:įor starters, we need to import all of our required packages for this to work.

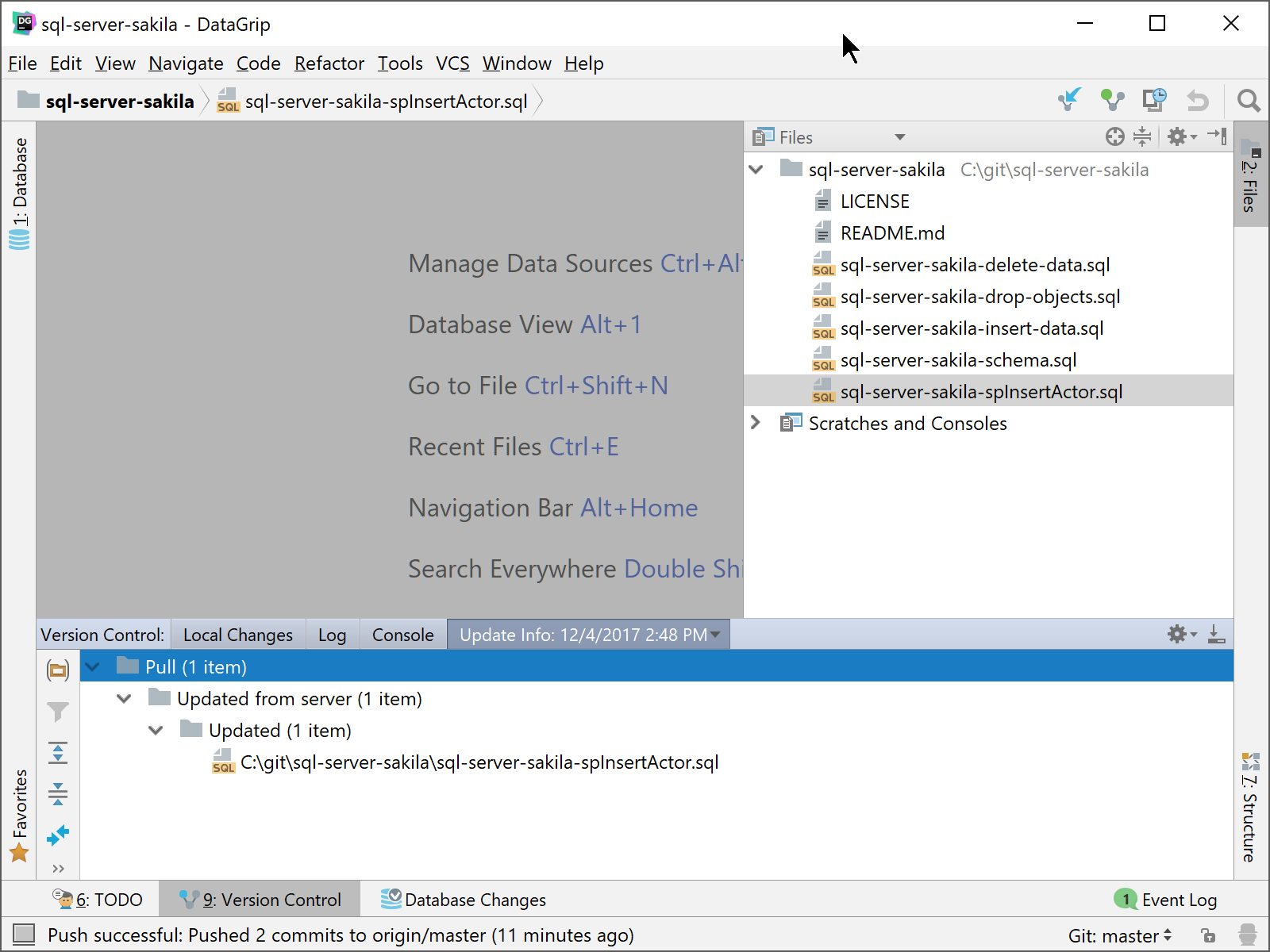

So now by typing “npm run dev”, we actually run “node index.js” and execute the code that we have in our index.js file, which we need to create. The last thing we need to do with our package.json file is to create our run script (the command that we will be using to run our application) is edit our scripts: We will use Express to preview our data in the browser, Kafkajs to connect to Kafka, and to connect to our schema registry and be able to deserialize the message. This will update our package.json file with the dependencies included: Let’s then install the dependencies that we will use, by typing on our terminal: This will create a package.json file for us, looking like this: Let’s start by opening up a terminal window and initializing our project in a new directory by typing: We are presuming that we already have npm and nodejs installed on our local machine but if that's not the case, download them here. We will consume the data in a local nodeJS application, spin up an Express Server, and preview the data in our Browser. Lastly, let’s consume the data from our topic. Some instructions can be found here on stackoverflow and crunchify. You need to map your localhost (127.0.0.1) to XXXXXXXX. Or in case you are using VScode you can right-click the file and select "Run Java" (make sure you have installed the Extension Pack for Java in case the option is not appearing).Īt this point, you should see the "Sent Data Successfully" message to your console, which indicates that we have sent data to Kafka as expected. To test things out, let's package our code by typing the following in a terminal window at the project location folder:Īfter receiving the "BUILD SUCCESS" info on our terminal, let's execute it with: Finally, we add the data to a record and send our records to the Kafka topic. Then, we construct some card objects using random data and we serialize the objects to Protobuf. 
We then specify the properties of the Kafka broker, schema registry URL, and our topic name: protos_topic_cards.

In the above snippet, we start by naming our package and importing the libraries that we will need later on. Opening our SendKafkaProto.java file we will see:

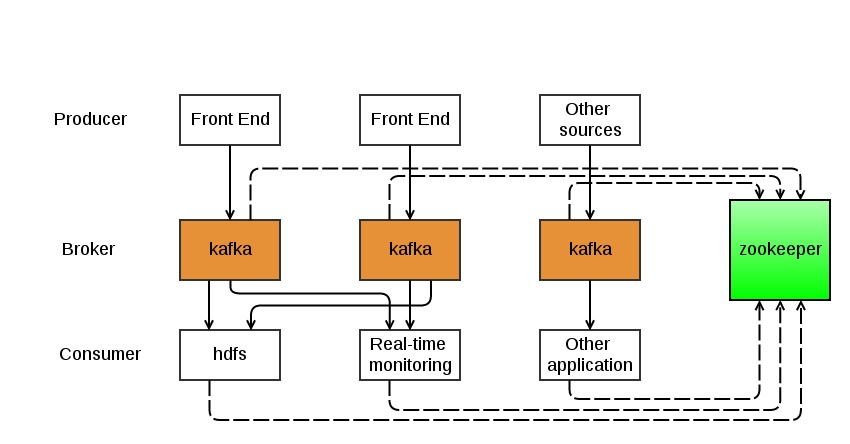

Consume the data through a Node.js application Build a basic application that produces Protobuf data (in Java) To see an end-to-end (local) flow, we will: Let’s go over an example of interacting with Protobuf data. It provides first-class Protobuf support:Įxplore & evolve schemas across any Kafka infrastructureīuild stream processing applications with SQL syntax. A schema like Protobuf becomes more compelling in principle, transferring data securely and efficiently.Ĭonfluent Platform 5.5 has made a Schema Registry aware Kafka serializer for Protobuf and Protobuf support has been introduced within Schema Registry itself.Īnd now, Lenses 5.0 is here. Loose coupling in Kafka increases the likelihood that developers are using different frameworks & languages. If Protobuf is so commonly used for request/response, what makes it suitable for Kafka, a system that facilitates loose coupling between various services? So Protobuf makes sense with microservices and is gaining a wider appeal than AVRO.
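On the consuming side, the Node.js application relies on a schema registry client to deserialize each message. As a rough illustration of the first step such a client performs: messages written by a Schema Registry aware serializer follow Confluent's wire format, where the payload is prefixed with a magic byte (0) and a 4-byte big-endian schema ID. For Protobuf, a list of message indexes follows before the encoded message itself. The sketch below parses only those first five bytes with Node's built-in Buffer. The helper name `parseConfluentHeader` and the sample bytes are made up for illustration and are not part of the original application.

```javascript
// Sketch: split a Schema Registry framed Kafka message into schema ID and body.
// Assumes the Confluent wire format: 1 magic byte (0) + 4-byte big-endian schema ID.
// parseConfluentHeader is a hypothetical helper, not part of KafkaJS or Express.
function parseConfluentHeader(message) {
  if (!Buffer.isBuffer(message) || message.length < 5) {
    throw new Error("message too short to carry a schema registry header");
  }
  const magicByte = message.readUInt8(0);
  if (magicByte !== 0) {
    throw new Error(`unexpected magic byte: ${magicByte}`);
  }
  // The schema ID identifies which registered schema was used to serialize the body.
  const schemaId = message.readUInt32BE(1);
  // For Protobuf, the rest starts with message indexes, followed by the encoded message.
  const rest = message.subarray(5);
  return { schemaId, rest };
}

// Made-up sample: magic byte 0, schema ID 42, then three arbitrary body bytes.
const sample = Buffer.from([0, 0, 0, 0, 42, 0, 8, 1]);
const parsed = parseConfluentHeader(sample);
console.log(parsed.schemaId, parsed.rest.length);
```

In a real consumer, a registry client would use the schema ID to fetch the schema and decode the remaining bytes. This sketch stops at the header, so it runs without any Kafka or registry connection.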
